ARA Infrastructure

As discussed in the overview section, ARA is equipped with a rich set of wireless equipment operating on a wide variety of wireless technologies. On the access network side, called AraRAN, the testbed includes Software Defined Radios (SDRs) and Commercial-Off-The-Shelf (COTS) platforms operating in frequencies ranging from low-UHF to millimeter wave (mmWave). In addition to the RAN, ARA provides a wireless backhaul between the sites using high-capacity Free Space Optical (FSO), mmWave, and microwave links.

Radio Access Network

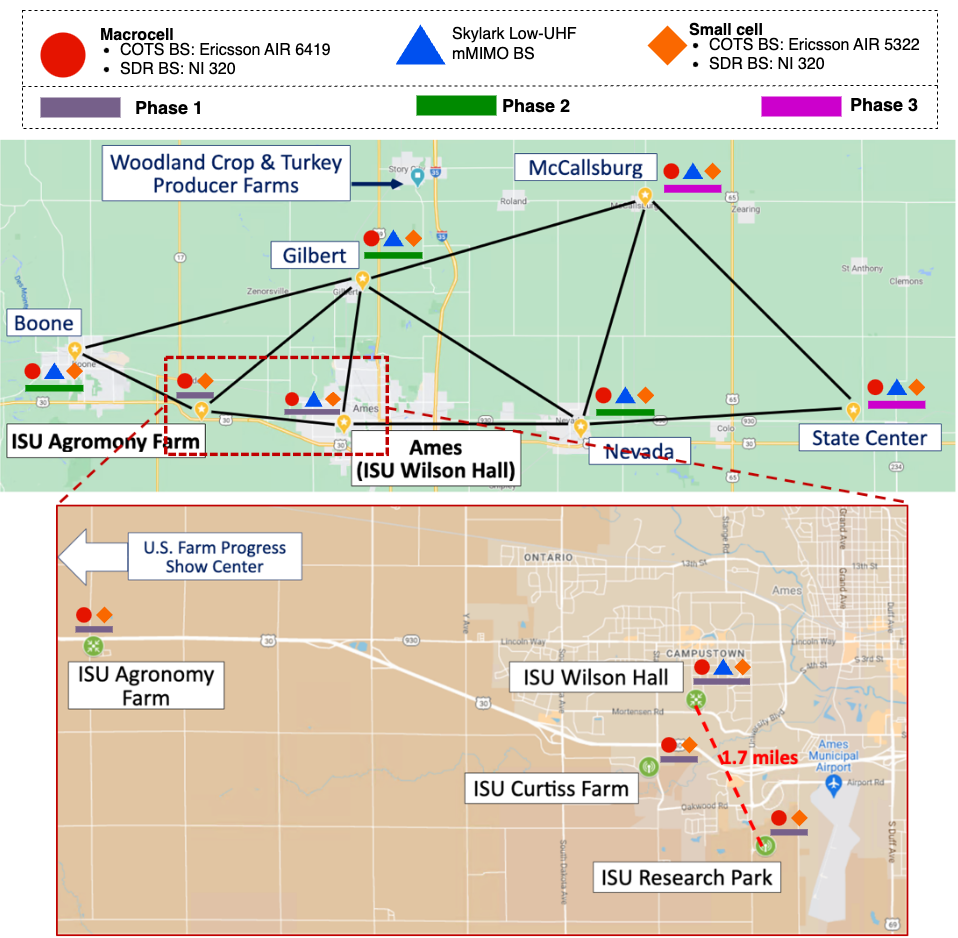

The Radio Access Network component of ARA, called AraRAN, includes several wireless technologies that are deployed around Ames, Iowa at specific locations. The components include fixed base station sites as well as fixed and mobile user equipment. Major AraRAN components include:

NI SDR Base Stations (BS) and User Equipment (UE)

Skylark Base Station and Customer Premises Equipment (CPE)

Ericsson Base Stations and User Equipment

NI Base Stations and User Equipment

AraRAN includes Software Defined Radio (SDR) based BSes realized using NI USRP N320s. The BSes are deployed at four locations: (1) Wilson Hall, (2) Curtiss Farm, (3) Agronomy Farm, and (4) Research Park. The SDRs are connected to Tower Mounted Boosters (TMBs), each consisting of a power amplifier and a low-noise amplifier and supporting n77 TDD. The connection is via low-attenuation AVA5-50 RF cables from CommScope. The TMB is connected to a CommScope antenna via LMR400 jumper cables. The radios and front-ends operate within the 3400–3600 MHz frequency band. Each BS has three sectors, served by three USRP N320s connected to a single COTS server. That is, three concurrent experiments can be performed at each base station using virtualization via Docker containers running the 5G stack. The SDRs are connected to Dell servers via high-speed 10G SFP+ interfaces. Detailed specifications for the equipment mentioned above are highlighted below.

Similar to the BSes, the User Equipment in AraRAN is realized using NI USRP B210 radios. The UEs are distributed across different regions of Ames, including Central Ames, Curtiss Farm, Kitchen Farm, and Agronomy Farm, to cover both agricultural and commercial use cases. This gives experimenters the opportunity to perform practical experiments. The UEs are equipped with software defined radios from National Instruments and corresponding RF front-ends. The USRPs have board-mounted GPSDOs for synchronization purposes and are connected to a COTS server to support experimentation. Our UEs are also equipped with NI B205 USRPs for spectrum monitoring purposes. Currently, our UEs provide Internet connectivity to real users around the city of Ames.

Detailed specifications of SDR BS and UE are provided here.

Skylark Base Station and Customer Premises Equipment

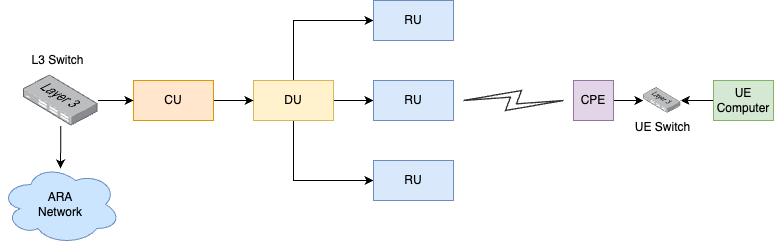

The Skylark base station of AraRAN consists of three components: the Central Unit (CU), the Distributed Unit (DU), and the Radio Units (RUs). In the downlink direction, the CU connects to the DU, which in turn connects to the RUs. On the other side, the CU connects to a Layer-3 (L3) switch, which acts as the gateway.

The customer premises equipment has one Gigabit Ethernet port for connecting it to a Power over Ethernet (PoE) switch. The switch provides power as well as network connectivity to the CPE. A computer in the same VLAN as the CPE is attached to the switch. The computer gets an IP address from the L3 switch at the BS, while the path from the CPE through the RU, DU, and CU acts as an L2 link. The logical diagram of the Skylark deployment is provided below.

In Phase-1, ARA consists of a single Skylark BS deployed at Wilson Hall. The figure below shows a snapshot of the field deployment of the Skylark BS. We plan to add five more BSes in subsequent phases. As far as CPEs are concerned, ARA deploys 22 CPEs in Phase-1, spread across different regions around Ames.

Phase-1 envisions 22 CPEs connected to the Skylark BS. There are three RUs at the BS, each covering a sector of 120 degrees. The three RUs are connected to the DU, which is in turn connected to the CU. As mentioned above, each UE computer deployed in the field with a CPE attached gets an IP address from the L3 switch connected to the CU at the BS.
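To illustrate how three 120-degree sectors partition the area around a BS, here is a minimal sketch that maps a CPE's azimuth (as seen from the BS) to a sector index. The function name, the sector numbering, and the assumption that sector 0 is centered on 0 degrees are all hypothetical; the actual boresight directions of the Skylark RUs are not stated here.

```python
def sector_for_azimuth(azimuth_deg: float,
                       num_sectors: int = 3,
                       boresight0_deg: float = 0.0) -> int:
    """Return the index of the sector that covers the given azimuth.

    Assumption (hypothetical): sectors have equal width and sector 0 is
    centered on boresight0_deg, with indices increasing clockwise.
    """
    width = 360.0 / num_sectors  # 120 degrees for three sectors
    # Shift so that sector 0 starts at offset 0, then wrap into [0, 360).
    offset = (azimuth_deg - boresight0_deg + width / 2.0) % 360.0
    return int(offset // width)


if __name__ == "__main__":
    for az in (0, 90, 180, 270, 359):
        print(az, "->", sector_for_azimuth(az))
```

With the default orientation, azimuths from 300 up to (but not including) 60 degrees fall in sector 0, 60 to 180 in sector 1, and 180 to 300 in sector 2.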

The ARA Resource Specification provides the detailed specification of Skylark. The APIs are documented in the Skylark API section, and the experiments are documented in Skylark Experiments.

Ericsson Base Stations and COTS User Equipment

ARA employs Commercial-Off-The-Shelf (COTS) 5G massive MIMO systems from Ericsson where each base station is equipped with the following radios.

COTS 5G SA Massive MIMO with 3D Beamforming and NR-DC

mid-band radio: Ericsson AIR 6419 operating in the n77 5G band (3.45 - 3.55 GHz) with 192 antennas, supporting up to 16 layers of MU-MIMO.

mmWave radio: Ericsson AIR 5322 operating in the n261 frequency band (27.5 - 27.9 GHz) with 256 antennas, supporting up to 8 layers of MU-MIMO.
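As a quick sanity check on the frequency ranges listed above, the following sketch verifies that each range lies inside the corresponding 3GPP NR band. The band edges (n77: 3300 to 4200 MHz, n261: 27500 to 28350 MHz) are taken from the 3GPP band definitions; the `Band` class and the dictionary layout are illustrative names, not part of any ARA API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Band:
    """A frequency band described by its lower and upper edges in MHz."""
    name: str
    low_mhz: float
    high_mhz: float

    def contains(self, low_mhz: float, high_mhz: float) -> bool:
        """True if the range [low_mhz, high_mhz] lies fully inside the band."""
        return self.low_mhz <= low_mhz and high_mhz <= self.high_mhz


# 3GPP NR band edges.
N77 = Band("n77", 3300.0, 4200.0)
N261 = Band("n261", 27500.0, 28350.0)

# Operating ranges as listed for the Ericsson radios (converted to MHz).
ARA_RANGES = {
    "Ericsson AIR 6419 (mid-band)": (N77, 3450.0, 3550.0),
    "Ericsson AIR 5322 (mmWave)": (N261, 27500.0, 27900.0),
}


def check_ranges(ranges):
    """Map each radio label to whether its range fits inside its band."""
    return {label: band.contains(lo, hi)
            for label, (band, lo, hi) in ranges.items()}


if __name__ == "__main__":
    for label, ok in check_ranges(ARA_RANGES).items():
        print(f"{label}: {'within band' if ok else 'OUT OF BAND'}")
```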

Along with four base stations in Phase-1, ARA also deploys 20+ field-deployed UE stations equipped with the Quectel RG530 module, which uses 4 Transmit and Receive (TRX) antennas for the mid-band n77 and 4 TRX antennas for the mmWave.

ARA COTS systems operate in the 5G Stand-Alone (SA) mode and support New Radio Dual Connectivity (NR-DC) with the mmWave carrier anchored at the mid-band carrier, providing an aggregate communication capacity of 3+ Gbps for a single user and enabling advanced wireless applications such as agriculture automation. Through first-of-its-kind configuration and measurement APIs, the ARA COTS system enables users to collect extensive real-world measurement data about 5G massive MIMO systems at scale, thus enabling integrative wireless studies and application pilots.

Backhaul Network

The backhaul network in ARA, which we call AraHaul, consists of long-range wireless and free space optical links along with traditional optical fiber.

Microwave and Millimeter Wave Backhaul

For AraHaul, we use Aviat WTM 4800 radios for long-range microwave and millimeter-wave wireless links connecting different base station sites. For Phase-1, a wireless backhaul link is established between Wilson Hall and Agronomy Farm. The link uses the microwave (11 GHz) and mmWave (80 GHz) frequency bands. The WTM 4800 radios are equipped with one or two transceivers and can be configured for a variety of operational modes, including single-transceiver single-band, dual-transceiver single-band, and dual-transceiver multi-band. The detailed specification of the Aviat radios is described here.
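One way to get a feel for the difference between the two bands is the standard free-space path loss (FSPL) formula. The sketch below is only a rough illustration: the 10 km distance is a hypothetical value, not the actual link length, and the calculation ignores antenna gains as well as rain and atmospheric attenuation, which matter considerably at 80 GHz.

```python
import math


def fspl_db(distance_km: float, freq_ghz: float) -> float:
    """Free-space path loss in dB for a distance in km and frequency in GHz."""
    return 20.0 * math.log10(distance_km) + 20.0 * math.log10(freq_ghz) + 92.45


if __name__ == "__main__":
    distance_km = 10.0  # hypothetical link length, for illustration only
    loss_11 = fspl_db(distance_km, 11.0)
    loss_80 = fspl_db(distance_km, 80.0)
    print(f"11 GHz: {loss_11:.1f} dB")
    print(f"80 GHz: {loss_80:.1f} dB")
    print(f"difference: {loss_80 - loss_11:.2f} dB")
```

The gap between the two bands, 20·log10(80/11), is about 17.2 dB and does not depend on the distance chosen.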

Free Space Optical Backhaul

In parallel to the mmWave and microwave Aviat link, AraHaul realizes a long-range Free Space Optical Communication (FSOC) link. The link is established using custom-made optical telescopes that use a laser at a frequency of 194 THz. For Phase-1, the optical link is realized between Wilson Hall and Agronomy Farm. The FSOC module is installed and is currently under testing. The detailed specification of the FSOC system is described here.
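Optical links are more often described by wavelength than by frequency. As a small worked conversion, the sketch below applies λ = c / f to the 194 THz figure; the function name is illustrative.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458  # exact, by definition of the metre


def wavelength_nm(freq_hz: float) -> float:
    """Convert an optical frequency in Hz to a vacuum wavelength in nm."""
    return SPEED_OF_LIGHT_M_PER_S / freq_hz * 1e9


if __name__ == "__main__":
    print(f"{wavelength_nm(194e12):.2f} nm")
```

For 194 THz this gives roughly 1545.3 nm, which falls in the conventional optical C-band (1530 to 1565 nm) used by standard fiber-optic telecom components.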

Fiber Backhaul

The ARA wired backhaul network is divided into two parts: (1) the data network and (2) the management network. The data center and the four field sites are connected via both networks. Each site is equipped with separate switches for the data network and the management network.

At the data center, we use a Juniper ACX 7100 as the data switch, while the field-deployed BS sites use the Juniper ACX 710. For the management network, we use a Cisco 9300 at the data center and a Cisco 3850 at each field-deployed BS site. The four field-deployed BS sites have point-to-point connectivity with the data center via 100G/40G fiber cables. Each switch (data/management) acts as a gateway for the local networks connected to it, which consist of devices such as servers and radios. The Open Shortest Path First (OSPF) routing protocol is used between the switches to ensure network reachability.
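The fiber topology described above is a star with the data center as the hub. OSPF computes routes with a shortest-path-first (Dijkstra) calculation, so the sketch below runs Dijkstra over that star to show that traffic between two field sites transits the data center. This is an illustration only, not the switch configuration: the node names are labels chosen here, the uniform link cost of 1 is hypothetical, and the wireless backhaul links are left out.

```python
import heapq

FIELD_SITES = ["Wilson Hall", "Curtiss Farm", "Agronomy Farm", "Research Park"]


def build_star(hub, spokes, cost=1):
    """Build an undirected star graph: every spoke links only to the hub."""
    graph = {hub: {}}
    for site in spokes:
        graph[hub][site] = cost
        graph.setdefault(site, {})[hub] = cost
    return graph


def shortest_path(graph, src, dst):
    """Dijkstra's algorithm; returns (total_cost, path) or None if unreachable."""
    dist = {src: 0}
    prev = {}
    heap = [(0, src)]
    while heap:
        d, node = heapq.heappop(heap)
        if node == dst:
            break
        if d > dist.get(node, float("inf")):
            continue  # stale heap entry
        for neighbor, weight in graph[node].items():
            candidate = d + weight
            if candidate < dist.get(neighbor, float("inf")):
                dist[neighbor] = candidate
                prev[neighbor] = node
                heapq.heappush(heap, (candidate, neighbor))
    if dst not in dist:
        return None
    # Walk the predecessor chain back from dst to src.
    path = [dst]
    while path[-1] != src:
        path.append(prev[path[-1]])
    return dist[dst], path[::-1]


if __name__ == "__main__":
    fiber = build_star("Data Center", FIELD_SITES)
    print(shortest_path(fiber, "Wilson Hall", "Agronomy Farm"))
```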

Compute

Besides the compute resources at the BS and UE computers deployed at the sites, ARA is equipped with two high-performance compute nodes at the data center. The dedicated compute nodes are realized using Dell PowerEdge R750 servers equipped with Intel(R) Xeon(R) Gold 63xx CPUs, 384 GB of memory, and 1.92 TB of storage space.

Storage

Similar to compute resources, ARA provides users with an object storage service for permanent storage of data. To realize the object storage, we use two dedicated Dell PowerEdge R750 storage servers with an Intel(R) Xeon(R) Gold 5317 processor, 128 GB of memory, and 14.6 TB of storage. The disks are configured in RAID 5 for redundancy and fault resilience.

Weather Sensors

ARA allows users to perform experiments that analyze the impact of weather conditions on wireless communication systems. To sense the weather in real time, we install generic weather stations (providing parameters including temperature, pressure, humidity, rain rate, and wind speed) as well as disdrometers to sense fine-grained rain-related parameters (including precipitation type, rain intensity, drop size distribution, and drop velocity). The weather sensors are installed at two base station sites, Wilson Hall and Agronomy Farm, as shown in the following figure. The detailed specification of the weather stations and the related APIs is provided in ARA Weather APIs.